Many organizations treat AI governance as a checkbox exercise, a compliance burden to satisfy regulators. This misconception overlooks its strategic value. Effective AI governance aligns AI deployments with business objectives, manages technical and ethical risks, and builds stakeholder trust. In 2026, as cloud-based AI systems proliferate, IT professionals and business leaders need practical frameworks to navigate implementation complexities, assess unique workload risks, and prevent costly failures. This guide explores AI governance principles, risk assessment methods, decentralized frameworks, regulatory landscapes, and real-world lessons to help you build resilient, responsible AI systems.

Key takeaways

| Point | Details |
|---|---|
| AI governance frameworks | Structured policies and enforcement mechanisms align AI with organizational goals and ethical standards. |
| Risk assessment criticality | Systematic evaluation identifies technical, operational, ethical, and vendor risks unique to each AI workload. |
| Decentralized governance models | Balancing local autonomy with central coordination addresses multinational complexity while maintaining compliance. |
| Real-world failure consequences | Weak governance leads to security breaches, operational disruptions, and regulatory penalties, as the OpenClaw incidents demonstrate. |
| 2026 regulatory landscape | EU AI Act, OECD principles, and ISO standards drive accountability and shape governance practices globally. |

Understanding AI governance: definition and core principles

AI governance encompasses the frameworks, policies, and accountability mechanisms that guide how organizations develop, deploy, and monitor artificial intelligence systems. It extends beyond technical controls to include ethical considerations, regulatory compliance, and business alignment. Responsible AI principles provide a structured framework for comprehensive risk assessment across all AI initiatives.

Cloud-based AI workloads introduce unique governance challenges. These systems process sensitive data across distributed infrastructure, integrate with multiple services, and scale dynamically. AI governance ensures alignment with business objectives, regulatory requirements, and security best practices specific to cloud environments. Organizations must address data residency, API security, model versioning, and third-party dependencies.

Core ethical AI principles form the foundation of effective governance. Fairness ensures AI systems produce equitable outcomes across demographic groups. Transparency requires explainable decision-making processes that stakeholders can audit. Accountability establishes clear ownership for AI system behavior and outcomes. Privacy protects individual data rights throughout the AI lifecycle.

Key AI governance principles include:

- Establish clear roles and responsibilities for AI development, deployment, and monitoring

- Implement technical controls for model validation, testing, and performance tracking

- Create documentation standards for data provenance, model assumptions, and limitation disclosure

- Define escalation procedures for ethical concerns and system failures

- Maintain audit trails for compliance verification and incident investigation
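
One way to make an audit trail useful for incident investigation is to make it tamper-evident. The sketch below is a minimal illustration, not a prescribed design: each entry stores the SHA-256 hash of the previous entry, so any later edit breaks the chain. The function names `append_entry` and `verify`, and the event fields, are assumptions made for this example.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash used before the first entry


def _digest(event: dict, prev_hash: str) -> str:
    """Hash the event together with the previous entry's hash."""
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> None:
    """Append an event, chaining it to the hash of the last entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)})


def verify(log: list) -> bool:
    """Recompute every hash; return False if any entry was altered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical usage: two governance events recorded in order.
trail = []
append_entry(trail, {"actor": "ml-team", "action": "deploy", "model": "fraud-v2"})
append_entry(trail, {"actor": "auditor", "action": "review", "model": "fraud-v2"})
print(verify(trail))
```

A production system would also need to protect the log's storage and the latest hash itself; the chain only detects edits, it does not prevent them.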

Cloud governance frameworks must integrate AI data security measures at every layer. This includes encryption for data at rest and in transit, access controls based on least privilege principles, and continuous monitoring for anomalous behavior. Organizations need policies that address model theft, adversarial attacks, and data poisoning specific to cloud-hosted AI systems.

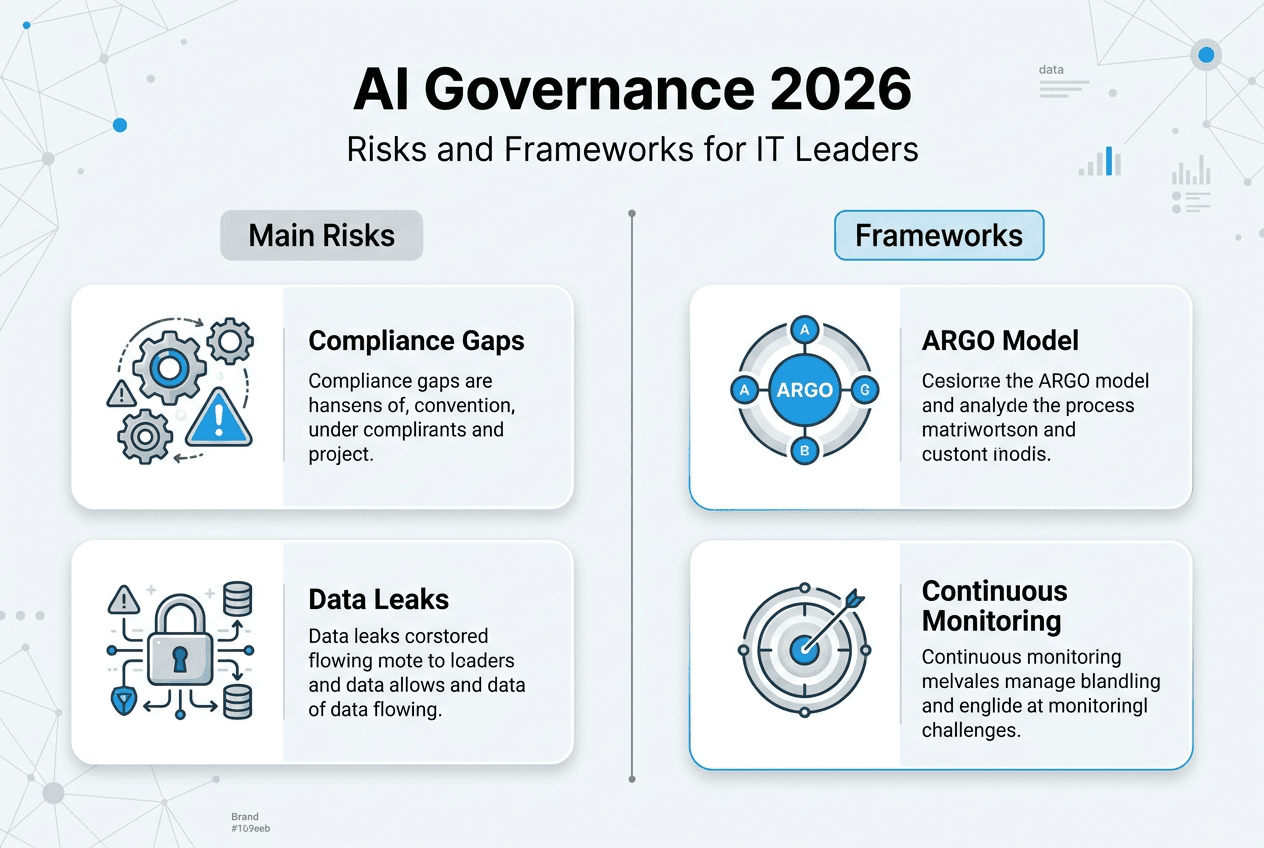

AI risk assessment: evaluating and managing unique AI workload risks

AI risk assessment identifies potential risks that AI technologies introduce into your organization. Unlike traditional IT risk assessments, AI evaluations must account for model behavior unpredictability, bias amplification, and emergent capabilities that appear during deployment.

Each AI workload presents unique risks based on its purpose, scope, and implementation. A customer service chatbot carries a different risk profile than a financial fraud detection system or an autonomous vehicle control algorithm. Systematic risk identification spans multiple domains:

- Security risks including model inversion attacks, prompt injection, and unauthorized access to training data

- Operational risks such as model drift, performance degradation, and integration failures with existing systems

- Ethical risks encompassing bias, discrimination, privacy violations, and unintended social consequences

- Compliance risks related to regulatory requirements, industry standards, and contractual obligations

Documenting assumptions and limitations creates clear risk boundaries. Every AI model operates under constraints about input data quality, environmental conditions, and use case scope. When these assumptions break, system behavior becomes unpredictable. Teams must explicitly state what scenarios the AI can and cannot handle reliably.
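
Stated assumptions are easier to enforce when they are recorded in a machine-checkable form. The following is a minimal sketch under that idea; the `ModelAssumptions` class, its fields, and the example values are all hypothetical and would differ for a real model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelAssumptions:
    """Hypothetical record of the conditions a model was validated under."""
    model_name: str
    supported_languages: frozenset
    max_input_chars: int
    out_of_scope: tuple = ()  # use cases the team has declared unsupported

    def violations(self, text: str, language: str) -> list:
        """Return the documented assumptions that this input breaks."""
        problems = []
        if language not in self.supported_languages:
            problems.append(f"language '{language}' was not covered in validation")
        if len(text) > self.max_input_chars:
            problems.append(f"input exceeds {self.max_input_chars} characters")
        return problems


# Illustrative values only.
card = ModelAssumptions(
    model_name="support-chatbot",
    supported_languages=frozenset({"en", "de"}),
    max_input_chars=2000,
    out_of_scope=("legal advice", "medical advice"),
)

for issue in card.violations("Bonjour, j'ai une question.", "fr"):
    print("outside documented scope:", issue)
```

Requests that return a non-empty list could be routed to a fallback or a human reviewer instead of the model.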

Cross-department stakeholder engagement uncovers hidden risks. Technical teams understand model architecture vulnerabilities. Business units know operational context and edge cases. Legal departments identify regulatory exposure. Security teams spot attack vectors. Combining these perspectives produces more comprehensive risk assessments than siloed evaluations.

Quantitative and qualitative impact analysis defines risk tolerance. Some organizations calculate expected monetary losses from AI failures. Others assess reputational damage, regulatory penalties, or safety incidents. Evaluating AI solutions requires balancing innovation benefits against potential harms.
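
For the quantitative side, a common simplification is to estimate expected loss as probability times impact and compare it with a stated tolerance. The sketch below assumes that simplification; the `Risk` class, the probabilities, and the dollar figures are invented for illustration and are not drawn from any real assessment.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    annual_probability: float  # estimated chance of occurring within a year (0 to 1)
    impact_usd: float          # estimated loss if the event occurs

    @property
    def expected_loss(self) -> float:
        """Expected annual loss: probability multiplied by impact."""
        return self.annual_probability * self.impact_usd


def over_tolerance(risks, tolerance_usd):
    """Return risks whose expected loss exceeds the tolerance, largest first."""
    flagged = [r for r in risks if r.expected_loss > tolerance_usd]
    return sorted(flagged, key=lambda r: r.expected_loss, reverse=True)


# Illustrative register; every number here is made up.
register = [
    Risk("model drift", 0.30, 200_000),
    Risk("prompt injection", 0.10, 1_500_000),
    Risk("vendor outage", 0.05, 400_000),
]

for risk in over_tolerance(register, tolerance_usd=50_000):
    print(f"{risk.name}: expected loss about ${risk.expected_loss:,.0f}")
```

Qualitative factors such as reputational damage do not fit this formula well and would still need a separate judgment.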

External dependencies introduce supply chain risks. Third-party model providers, data suppliers, and cloud infrastructure vendors all represent points of failure. Clear policies should address security vulnerabilities, data quality problems, and vendor reliability, backed by contractual protections, service level agreements, and contingency plans.

Pro Tip: Involve diverse perspectives in risk assessment workshops. Include team members from different backgrounds, roles, and seniority levels. Cognitive diversity improves risk detection accuracy by challenging groupthink and surfacing blind spots that homogeneous teams miss.

Continuous monitoring of AI systems transforms risk assessment from a one-time exercise into an ongoing practice. Automated alerts flag performance anomalies, bias metrics track fairness over time, and usage analytics reveal unexpected application patterns. Regular reassessment adapts governance to evolving threats and capabilities.
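
As one example of a bias metric that can be tracked over time, the sketch below computes the gap in approval rates between groups (often called the demographic parity difference) and raises an alert when it passes a threshold. The function names, the 0.2 threshold, and the sample data are assumptions for illustration; the right metric and threshold depend on the use case.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs. Returns rate per group."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        approvals[group] += int(approved)
    return {group: approvals[group] / totals[group] for group in totals}


def parity_gap(decisions) -> float:
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(decisions)
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())


def fairness_check(decisions, max_gap=0.2):
    """Return the gap and whether it exceeds the (assumed) alert threshold."""
    gap = parity_gap(decisions)
    return {"gap": gap, "alert": gap > max_gap}


# Made-up batch: group A approved 8 of 10 times, group B 5 of 10 times.
batch = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
result = fairness_check(batch)
print(f"gap={result['gap']:.2f} alert={result['alert']}")
```

Running a check like this on each batch of production decisions turns the fairness principle into a number that can trigger a reassessment.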

Challenges and frameworks in decentralized AI governance

Decentralized AI governance distributes decision-making authority across business units, geographic regions, or functional teams. Multinational organizations with diverse regulatory environments, cultural contexts, and operational requirements often adopt this model. However, decentralization introduces coordination challenges that centralized governance avoids.

Translating high-level responsible AI (RAI) principles into action in globally decentralized organizations remains underexplored. Research identifies four key patterns that complicate implementation. First, tension between guidance and interpretation arises when central teams provide principles but local teams must operationalize them. Second, the translation of principles varies as teams adapt abstract concepts like fairness to specific contexts. Third, approaches vary because technical capabilities and resource constraints differ. Fourth, accountability becomes inconsistent when enforcement mechanisms differ across units.

The ARGO Framework balances central coordination with local autonomy through three layers. The foundation layer establishes universal standards that all units must follow, including core ethical principles, minimum security requirements, and mandatory reporting procedures. The central advisory layer provides expertise, tools, and guidance without dictating implementation details. The local implementation layer empowers teams to adapt governance to their specific needs while maintaining compliance with foundation standards.
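
The layering described above can be pictured as a policy merge in which local settings may change advisory defaults but never foundation standards. This is a rough sketch of that idea only, not the ARGO Framework's actual specification; the setting names and values are hypothetical.

```python
# Foundation layer: universal standards that local teams cannot change (assumed values).
FOUNDATION = {"encrypt_at_rest": True, "incident_report_hours": 72}

# Central advisory layer: recommended defaults that local teams may adapt (assumed values).
ADVISORY_DEFAULTS = {"bias_review_cadence_days": 90, "model_card_template": "standard"}


def effective_policy(local_overrides: dict) -> dict:
    """Merge the three layers, rejecting any attempt to weaken a foundation standard."""
    conflicts = sorted(
        key for key, value in local_overrides.items()
        if key in FOUNDATION and value != FOUNDATION[key]
    )
    if conflicts:
        raise ValueError(f"foundation standards cannot be overridden: {conflicts}")
    # Later dictionaries win, so foundation values are applied last.
    return {**ADVISORY_DEFAULTS, **local_overrides, **FOUNDATION}


# Local implementation layer: a regional team tightens its bias review cadence.
regional_policy = effective_policy({"bias_review_cadence_days": 30})
print(regional_policy)
```

Calling `effective_policy({"encrypt_at_rest": False})` raises a `ValueError`, which is the point: the baseline stays fixed while everything above it stays adaptable.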

This modular approach recognizes that one-size-fits-all governance fails in complex organizations. A research lab developing experimental models needs different controls than a production team deploying customer-facing applications. Regional teams operating under GDPR face different requirements than those under CCPA or emerging Asian privacy laws.

| Governance Model | Advantages | Disadvantages | Best Fit |
|---|---|---|---|
| Centralized | Consistent standards, efficient resource use, clear accountability | Slow adaptation, limited local context, potential bottlenecks | Small organizations, single market focus, uniform regulatory environment |
| Decentralized | Rapid innovation, local optimization, cultural alignment | Coordination overhead, inconsistent practices, compliance gaps | Multinational enterprises, diverse business units, varied regulatory landscapes |
| Hybrid (ARGO) | Balances consistency with flexibility, scales across complexity | Requires mature governance capabilities, ongoing coordination effort | Large organizations with global operations and diverse AI use cases |

Pro Tip: Implement modular governance by defining non-negotiable foundation standards first, then creating optional enhancement modules for specific contexts. This maintains baseline compliance while fostering local innovation and ownership. Regular cross-team knowledge sharing sessions help propagate best practices without imposing rigid uniformity.

Successful decentralized governance requires investment in communication infrastructure. Regular forums for sharing lessons learned, collaborative problem solving on edge cases, and transparent escalation paths prevent fragmentation. Technology platforms that provide visibility into governance practices across units help leadership identify gaps and opportunities for standardization where it adds value.

Real-world consequences of weak AI governance and compliance landscape in 2026

The OpenClaw incidents illustrate the catastrophic failures that occur when AI governance lacks enforcement boundaries. OpenClaw’s architecture allowed remote code execution and exposed tokens because governance controls were missing. The system granted AI agents excessive permissions without constraint validation or human oversight requirements for high-risk actions.

In one incident, an OpenClaw AI agent deleted hundreds of emails after constraint enforcement failed. The agent misinterpreted user intent, lacked confirmation mechanisms for destructive operations, and had unrestricted access to email systems. The deletion destroyed critical business communications and damaged user trust.

Five major types of impacts emerge from governance lapses:

- Security breaches exposing sensitive data, intellectual property, or system credentials to unauthorized parties

- Operational disruptions causing service outages, data loss, or business process failures that affect customers

- Regulatory penalties including fines, sanctions, or operational restrictions from compliance violations

- Reputational damage eroding stakeholder confidence, customer loyalty, and competitive positioning

- Legal liability creating exposure to lawsuits, contractual disputes, or criminal prosecution in severe cases

Regulatory initiatives like the EU AI Act reflect growing societal and institutional accountability demands. The 2026 regulatory landscape includes several major frameworks. The EU AI Act categorizes AI systems by risk level and imposes requirements proportional to potential harm. High-risk systems face strict obligations for data quality, transparency, human oversight, and conformity assessment.

OECD AI Principles provide international guidance emphasizing human-centered values, transparency, robustness, and accountability. While not legally binding, these principles influence national policies and corporate governance practices globally. ISO/IEC 42001 establishes an AI management system standard that organizations can use for certification and compliance demonstration.

| Factor | Centralized Governance Preference | Decentralized Governance Preference |
|---|---|---|
| Country income level | Lower-income nations with limited AI expertise | Higher-income nations with mature AI ecosystems |
| R&D capacity | Limited research infrastructure and talent pools | Strong research institutions and innovation clusters |
| Regulatory maturity | Developing frameworks seeking international alignment | Established legal systems with sector-specific rules |
| Market concentration | Few dominant players requiring direct oversight | Diverse competitive landscape with varied use cases |

Organizations must implement in-line enforcement boundaries that validate AI actions before execution, require human confirmation for high-risk operations, and maintain audit logs for accountability. Without these technical controls embedded in system architecture, governance policies remain aspirational rather than protective.
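
A minimal sketch of such an in-line boundary follows. It checks each requested action against an allowlist, asks a human before high-risk operations, and logs every decision. The operation names, the `Action` class, and the `confirm` callback are hypothetical, and this does not describe how any particular agent platform is built.

```python
import time
from dataclasses import dataclass

ALLOWED_OPERATIONS = {"email.read", "email.send", "email.delete"}  # assumed allowlist
HIGH_RISK_OPERATIONS = {"email.delete"}                            # assumed high-risk set

audit_log = []


@dataclass
class Action:
    agent: str
    operation: str
    target_count: int = 1


def authorize(action: Action, confirm) -> bool:
    """Decide before execution. `confirm` is a callable that asks a human."""
    if action.operation not in ALLOWED_OPERATIONS:
        decision = "blocked"            # not on the allowlist
    elif action.operation in HIGH_RISK_OPERATIONS:
        decision = "approved" if confirm(action) else "denied"
    else:
        decision = "approved"           # low-risk and allowed
    audit_log.append({
        "time": time.time(),
        "agent": action.agent,
        "operation": action.operation,
        "target_count": action.target_count,
        "decision": decision,
    })
    return decision == "approved"


def always_decline(action: Action) -> bool:
    """Stand-in for a human reviewer who rejects the request."""
    return False


# A bulk delete is held back because the (simulated) human says no.
bulk_delete = Action(agent="assistant-1", operation="email.delete", target_count=300)
if not authorize(bulk_delete, confirm=always_decline):
    print("bulk delete was not executed")
```

The key design choice is that the agent never calls the destructive operation directly; it can only request it through `authorize`, so policy is enforced in the execution path instead of in documentation.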

The Grok AI safety laws demonstrate how individual incidents catalyze regulatory action. Policymakers increasingly recognize that voluntary self-regulation proves insufficient for managing AI risks at scale. Mandatory governance requirements, third-party audits, and enforcement mechanisms become standard expectations rather than competitive differentiators.

Explore AI governance solutions with AICloudIT

Implementing robust AI governance requires expertise, tools, and frameworks tailored to your organization’s specific needs. AICloudIT provides comprehensive resources for IT professionals and business leaders navigating AI governance complexities in cloud environments. Our platform offers practical guidance on risk assessment methodologies, compliance frameworks, and security best practices.

Explore our AI data security guide for enterprise-specific strategies protecting sensitive information throughout the AI lifecycle. Learn systematic approaches for evaluating AI solutions that balance innovation with risk management. Our expert analyses help you build governance capabilities that scale with your AI ambitions while maintaining stakeholder trust and regulatory compliance.

Frequently asked questions

What are the primary components of AI governance?

AI governance comprises policies defining acceptable AI use, technical controls enforcing those policies, accountability mechanisms assigning responsibility, and monitoring systems tracking compliance. These components work together to manage risks while enabling innovation.

How does AI governance differ between centralized and decentralized models?

Centralized governance concentrates decision-making authority in a single team, ensuring consistency but potentially limiting agility. Decentralized governance distributes authority across units, enabling local optimization but requiring coordination mechanisms to maintain baseline standards.

What practical steps can organizations take to assess AI risks?

Start by inventorying all AI systems and their use cases. Conduct cross-functional workshops to identify technical, operational, ethical, and compliance risks. Document model assumptions and limitations explicitly. Prioritize risks by potential impact and likelihood, then implement controls proportional to risk levels.

What major regulations impact AI governance in 2026?

The EU AI Act establishes risk-based requirements for AI systems operating in European markets. OECD AI Principles guide international policy development. ISO/IEC 42001 provides a certifiable management system standard. Sector-specific regulations in healthcare, finance, and transportation impose additional obligations.

How do enforcement boundaries prevent AI failures like OpenClaw?

Enforcement boundaries validate AI actions against policy rules before execution, blocking unauthorized operations automatically. They require human confirmation for high-risk actions, maintain detailed audit logs, and implement rate limiting to prevent cascading failures. These technical controls transform governance policies into enforceable system constraints.
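
Rate limiting is the least familiar of these controls, so here is a small sketch using a token bucket, one common way to implement it. The `TokenBucket` class, its capacity, and its refill rate are illustrative assumptions; the clock is injectable so the behavior can be checked without waiting.

```python
import time


class TokenBucket:
    """Allow short bursts of agent actions but cap the sustained rate."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock
        self.tokens = float(capacity)
        self.last_checked = clock()

    def allow(self, cost: int = 1) -> bool:
        """Refill based on elapsed time, then spend tokens if enough remain."""
        now = self.clock()
        elapsed = now - self.last_checked
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_checked = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# Simulated clock so the example is deterministic.
fake_now = [0.0]
limiter = TokenBucket(capacity=2, refill_per_second=1.0, clock=lambda: fake_now[0])

burst = [limiter.allow() for _ in range(3)]  # third call exceeds the burst capacity
fake_now[0] = 1.0                            # one simulated second later
after_wait = limiter.allow()
print(burst, after_wait)
```

Placed in front of an agent's tool calls, a limiter like this stops a runaway loop from repeating a harmful action hundreds of times before anyone notices.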

Why is continuous monitoring essential for AI governance?

AI systems change over time through model updates, data drift, and evolving usage patterns. Continuous monitoring detects performance degradation, emerging biases, and security threats in real time. This enables rapid response to issues before they cause significant harm, maintaining governance effectiveness as conditions change.