Many IT professionals believe cloud computing requires mastering hundreds of complex services before building anything useful. This misconception prevents talented architects from leveraging cloud platforms effectively. In reality, understanding a handful of foundational principles enables you to design reliable, high-performance systems that scale with your organization’s needs. This guide clarifies core cloud computing concepts aligned with the AWS Well-Architected Framework, focusing on the reliability and performance efficiency pillars, which directly shape your architectural decisions and technical outcomes.

Key takeaways

| Point | Details |
|---|---|
| Reliability foundations | Monitoring service quotas and designing for network availability prevents unexpected outages |
| Performance optimization | Balancing cost, architecture patterns, and benchmarking creates scalable cloud solutions |
| Architectural trade-offs | Every design decision impacts customer experience, system efficiency, and operational costs |
| Continuous improvement | Iterative refinement guided by metrics and feedback drives better cloud outcomes |

Understanding cloud computing fundamentals

Cloud computing delivers IT resources over the internet on a pay-as-you-go basis, eliminating the need for physical infrastructure management. The three primary service models are Infrastructure as a Service (IaaS), which provides virtualized computing resources; Platform as a Service (PaaS), offering development and deployment environments; and Software as a Service (SaaS), delivering complete applications. Understanding these models helps you select the right abstraction level for your workloads.

Deployment models define where and how cloud resources operate. Public clouds run on shared infrastructure managed by providers like AWS, Azure, or Google Cloud. Private clouds dedicate resources to a single organization, offering greater control. Hybrid clouds combine both approaches, letting you keep sensitive data on-premises while leveraging public cloud scalability for other workloads. Each model presents distinct trade-offs in cost, control, and complexity.

The AWS Well-Architected Framework provides structured guidance across six pillars: operational excellence, security, reliability, performance efficiency, cost optimization, and sustainability. For IT professionals starting their cloud journey, reliability and performance efficiency deserve immediate attention. The Performance Efficiency Pillar guides architecture selection, resource allocation, and continuous optimization practices.

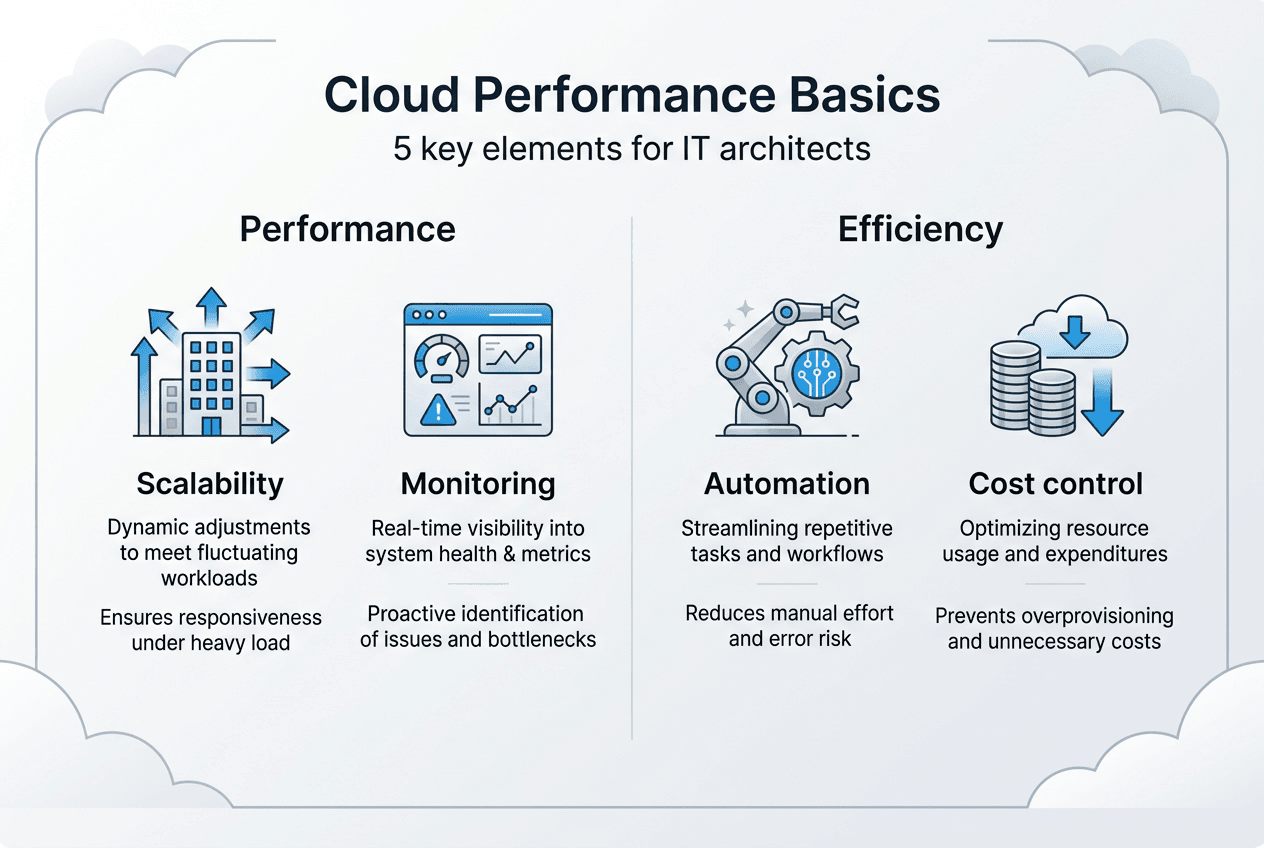

Four foundational concepts underpin effective cloud architecture:

- Scalability: The ability to handle increased workload by adding resources

- Elasticity: Automatic scaling in response to demand fluctuations

- Availability: The percentage of time systems remain operational and accessible

- Fault tolerance: Continuing operation despite component failures
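The availability definition becomes more tangible when expressed as a downtime budget. The following minimal sketch converts an availability percentage into the maximum minutes of downtime per year. It assumes a 365-day year and only illustrates the arithmetic; it is not a provider SLA calculation.

```python
# Convert an availability target (as a percentage) into a yearly downtime budget.
MINUTES_PER_YEAR = 365 * 24 * 60  # assumes a 365-day year


def downtime_minutes_per_year(availability_pct: float) -> float:
    """Return the maximum minutes of downtime per year allowed by the target."""
    if not 0.0 <= availability_pct <= 100.0:
        raise ValueError("availability_pct must be between 0 and 100")
    return MINUTES_PER_YEAR * (1.0 - availability_pct / 100.0)


if __name__ == "__main__":
    for target in (99.0, 99.9, 99.99):
        minutes = downtime_minutes_per_year(target)
        print(f"{target}% available -> {minutes:.1f} minutes down per year")
```

Each additional nine cuts the budget by a factor of ten. This is why higher targets usually require the redundancy and automation discussed in the reliability section.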

Architectural design patterns translate these concepts into practical implementations. Microservices architectures decompose applications into independent services that scale separately. Event-driven patterns decouple components using asynchronous messaging. Serverless architectures shift server provisioning, patching, and scaling to the provider and charge only for the compute time you actually use. Selecting appropriate patterns requires understanding your workload characteristics, team capabilities, and business requirements. Exploring cloud architecture types and diagrams provides visual frameworks for common design approaches.

Pro Tip: Start with managed services that abstract infrastructure complexity. Services like Amazon RDS for databases or AWS Lambda for compute let you focus on application logic while the provider handles much of the scaling, patching, and availability work.

Building reliable cloud systems: principles and best practices

Reliability in cloud computing means systems perform their intended functions correctly and consistently under expected conditions. The Reliability Pillar provides a structured approach to designing and operating reliable systems in the cloud. It is organized into four best practice areas: foundations, workload architecture, change management, and failure management, and the last of these includes testing reliability.

Service quotas are limits on the resources you can provision in a cloud account. Every AWS service has default quotas for API request rates, concurrent executions, or resource counts. Many can be raised on request, while others are fixed. Exceeding a quota causes requests to be throttled or rejected, and your users experience that as an outage. Monitor service quotas proactively using CloudWatch metrics and automated alerts, and request quota increases before you reach limits, not after outages occur. This simple practice prevents most quota-related incidents.

Network connectivity can become a critical single point of failure in cloud architectures. Designing for highly available network connectivity reduces this risk through redundancy and geographic distribution. Deploy resources across multiple Availability Zones within a region. Each zone operates on independent infrastructure with separate power and networking. If one zone fails, your application can continue serving traffic from the remaining zones with little or no interruption, provided those zones have enough capacity to absorb the load.
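Simple probability gives a rough estimate of the benefit of spreading a workload across zones. This sketch makes two simplifying assumptions: zone failures are independent, and any surviving zone can carry the full load. The 99% per-zone figure is a hypothetical input, not a published AWS number.

```python
def multi_zone_availability(zone_availability: float, zones: int) -> float:
    """Probability that at least one of `zones` independent zones is up.

    Assumes independent failures and that a single surviving zone can
    serve all traffic (hypothetical simplifications for illustration).
    """
    if not 0.0 <= zone_availability <= 1.0:
        raise ValueError("zone_availability must be between 0 and 1")
    if zones < 1:
        raise ValueError("zones must be at least 1")
    # The service is down only if every zone is down at the same time.
    return 1.0 - (1.0 - zone_availability) ** zones


if __name__ == "__main__":
    for n in (1, 2, 3):
        print(f"{n} zone(s): {multi_zone_availability(0.99, n):.6f}")
```

In practice, correlated failures reduce real-world gains below what this formula suggests. Examples include a shared dependency or a faulty deployment pushed to every zone.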

Disaster recovery strategies protect against region-wide failures or data corruption. Multi-region architectures replicate critical data and infrastructure across geographically separated regions. Recovery Time Objective (RTO) defines how quickly you must restore service, while Recovery Point Objective (RPO) specifies acceptable data loss measured in time. These metrics drive architecture decisions. Learning about AWS multi-region architecture for disaster recovery demonstrates practical implementation patterns.
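RTO and RPO become concrete when you compare them with what a recovery plan can actually deliver. This sketch checks hypothetical plans against a target. The plan names and minute values are invented for illustration. In a real assessment, the numbers should come from measured recovery drills.

```python
from dataclasses import dataclass


@dataclass
class RecoveryPlan:
    name: str
    data_copy_interval_min: float  # worst-case data loss: time between backup/replication points
    restore_time_min: float        # measured time to restore service in a drill


def meets_objectives(plan: RecoveryPlan, rto_min: float, rpo_min: float) -> bool:
    """A plan qualifies only if both its data-loss window and restore time fit the targets."""
    return plan.data_copy_interval_min <= rpo_min and plan.restore_time_min <= rto_min


if __name__ == "__main__":
    plans = [
        RecoveryPlan("nightly backups, restore in second region", 24 * 60, 240),
        RecoveryPlan("continuous replication, warm standby", 1, 15),
    ]
    # Hypothetical business targets: recover within 30 minutes, lose at most 5 minutes of data.
    for plan in plans:
        print(plan.name, "->", meets_objectives(plan, rto_min=30, rpo_min=5))
```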

Implement these reliability best practices systematically:

- Establish monitoring and alerting for all critical metrics before deploying to production

- Automate recovery procedures using infrastructure as code and automated failover mechanisms

- Test failure scenarios regularly through chaos engineering and disaster recovery drills

- Document runbooks detailing response procedures for common failure modes

- Implement circuit breakers and retry logic with exponential backoff in application code

- Use health checks and automatic replacement for failed instances
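Retry logic and circuit breakers can be combined in application code. The following minimal sketch pairs exponential backoff with "full jitter" and a simple circuit breaker based on consecutive failures. The class and function names, thresholds, and timeouts are hypothetical. Production code would typically rely on a maintained resilience library or the retry settings built into your SDK.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when the breaker is open and the call is skipped."""


class CircuitBreaker:
    """Opens after `failure_threshold` consecutive failures and rejects calls
    until `reset_timeout_s` has elapsed; then one trial call is let through."""

    def __init__(self, failure_threshold: int = 3, reset_timeout_s: float = 30.0):
        self.failure_threshold = failure_threshold
        self.reset_timeout_s = reset_timeout_s
        self.consecutive_failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if time.monotonic() - self.opened_at < self.reset_timeout_s:
                raise CircuitOpenError("circuit open; call skipped")
            self.opened_at = None  # half-open: allow a single trial call
        try:
            result = fn()
        except Exception:
            self.consecutive_failures += 1
            if self.consecutive_failures >= self.failure_threshold:
                self.opened_at = time.monotonic()  # trip (or re-trip) the breaker
            raise
        self.consecutive_failures = 0  # any success closes the breaker fully
        return result


def retry_with_backoff(fn: Callable[[], T], max_attempts: int = 5,
                       base_delay_s: float = 0.1, max_delay_s: float = 5.0,
                       sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `fn`, retrying failures after a random delay in
    [0, min(max_delay_s, base_delay_s * 2**attempt)] ("full jitter")."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # dependency known to be unhealthy: fail fast instead of waiting
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            bound = min(max_delay_s, base_delay_s * 2 ** attempt)
            sleep(random.uniform(0.0, bound))
    raise AssertionError("unreachable: the loop always returns or raises")
```

One way to combine them is to wrap the breaker inside the retry: `retry_with_backoff(lambda: breaker.call(fetch_price))`, where `fetch_price` is a hypothetical remote call. This retries transient errors but stops as soon as the breaker opens, so a failing dependency is not hammered with further requests.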

Pro Tip: Track your service quota utilization as a percentage rather than absolute numbers. Set alerts at 70% utilization to give yourself time for quota increase requests. This buffer prevents emergency situations and allows planned capacity expansion.
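Tracking utilization as a percentage takes only a small calculation. This sketch flags any quota at or above a threshold. The quota names and numbers are hypothetical. In practice, the usage and limit values would come from your provider's quota and monitoring services rather than from hard-coded dictionaries.

```python
def quota_alerts(usage: dict[str, float], limits: dict[str, float],
                 threshold_pct: float = 70.0) -> list[str]:
    """Return one message per quota whose utilization is at or above threshold_pct."""
    alerts = []
    for name, limit in sorted(limits.items()):
        if limit <= 0:
            raise ValueError(f"quota {name!r} must have a positive limit")
        used = usage.get(name, 0.0)
        utilization = 100.0 * used / limit
        if utilization >= threshold_pct:
            alerts.append(f"{name}: {utilization:.0f}% used ({used:g} of {limit:g})")
    return alerts


if __name__ == "__main__":
    limits = {"concurrent_function_executions": 1000, "vcpus_on_demand": 64}  # hypothetical
    usage = {"concurrent_function_executions": 750, "vcpus_on_demand": 20}
    for message in quota_alerts(usage, limits):
        print(message)
```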

Reliability forms the foundation of customer trust. Systems that fail unpredictably erode confidence faster than any feature can rebuild it. Invest in reliability from day one, not after your first major outage.

Exploring AWS reliability best practices provides additional implementation guidance and real-world examples from experienced practitioners.

Optimizing cloud performance and efficiency

Performance efficiency focuses on using computing resources effectively to meet requirements while adapting to changing demands and evolving technologies. The Performance Efficiency Pillar outlines best practices for architecture selection, benchmarking, and cost consideration that directly impact system responsiveness and operational expenses.

Traditional architectures and cloud-optimized patterns differ fundamentally in their approach to resource utilization and scaling. This comparison illustrates key distinctions:

| Architecture characteristic | Traditional approach | Cloud-optimized approach | Typical impact |
|---|---|---|---|
| Resource provisioning | Fixed capacity sized for peak load | Elastic scaling that matches demand | Less idle capacity; savings depend on how variable the load is |
| Database design | Monolithic relational database | Polyglot persistence using purpose-built databases | Faster queries when each data store matches its access pattern |
| Compute model | Long-running servers | Serverless functions and containers | Pay only for actual execution time |
| Caching strategy | Application-level only | Multi-tier caching including a CDN | Fewer requests reach the origin |

Five best practices drive performance efficiency in cloud environments. First, learn about available services and technologies continuously. Cloud providers release new capabilities monthly. Services you dismissed last year might now solve current challenges more effectively. Second, factor cost into architectural decisions from the start. The cheapest service that meets requirements beats over-engineered solutions. Third, use policies and reference architectures to guide teams toward proven patterns. Fourth, benchmark everything. Assumptions about performance rarely match reality. Fifth, make data-driven trade-offs that balance customer impact against operational complexity.

Architectural decisions always involve trade-offs. Adding caching layers improves response times but introduces complexity in cache invalidation. Serverless architectures reduce operational overhead but can add latency on cold starts. Multi-region deployments enhance availability but complicate data consistency. Evaluate these trade-offs by measuring customer impact quantitatively. A 100ms latency improvement that costs $50,000 annually makes sense if it prevents customer churn worth $200,000 over the same year.
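The latency example reduces to simple arithmetic. This sketch uses the paragraph's own figures and assumes both amounts cover the same year. Estimating the avoided churn is the hard part, so it is left as an input.

```python
def evaluate_tradeoff(annual_cost: float, annual_benefit: float) -> dict[str, float]:
    """Compare what a change costs per year with the value it is expected to protect."""
    if annual_cost <= 0:
        raise ValueError("annual_cost must be positive")
    net = annual_benefit - annual_cost
    return {"net_benefit": net, "return_on_cost": net / annual_cost}


if __name__ == "__main__":
    # Figures from the text: $50,000/year to save 100 ms, protecting $200,000 of churn.
    print(evaluate_tradeoff(annual_cost=50_000, annual_benefit=200_000))
```

A positive net benefit justifies the change only if the churn estimate itself is credible. That is why customer impact needs to be measured rather than assumed.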

AI tools increasingly assist with cloud performance monitoring and optimization. Machine learning models detect anomalies in metrics that humans miss. Predictive scaling adjusts resources before demand spikes occur. Automated recommendations identify cost optimization opportunities across thousands of resources. Understanding AI in cloud performance management reveals how intelligent automation enhances operational efficiency.

Avoid these common performance pitfalls:

- Selecting instance types based on familiarity rather than workload requirements

- Neglecting to enable compression for data transfer and storage

- Running databases on general-purpose instances instead of memory-optimized types

- Failing to implement connection pooling for database access

- Ignoring inter-region data transfer costs when designing multi-region architectures

- Using synchronous processing where asynchronous patterns would improve throughput
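Of the pitfalls above, missing connection pooling is the easiest to overlook. This sketch shows the idea with a fixed-size pool built on the standard library's thread-safe queue. `ConnectionPool` and the fake connection factory are hypothetical. Real applications would normally use the pooling built into their database driver or ORM; SQLAlchemy engines, for example, pool connections by default.

```python
import queue
from contextlib import contextmanager
from typing import Callable, Iterator


class ConnectionPool:
    """Fixed-size pool: connections are created once and reused across requests."""

    def __init__(self, factory: Callable[[], object], size: int = 5):
        if size < 1:
            raise ValueError("size must be at least 1")
        self._idle: queue.Queue = queue.Queue(maxsize=size)
        for _ in range(size):
            self._idle.put(factory())  # open all connections up front

    @contextmanager
    def connection(self, timeout_s: float = 5.0) -> Iterator[object]:
        # Blocks while every connection is checked out; raises queue.Empty on timeout.
        conn = self._idle.get(timeout=timeout_s)
        try:
            yield conn
        finally:
            self._idle.put(conn)  # always return the connection for reuse


if __name__ == "__main__":
    opened = []

    def fake_connect() -> object:
        # Stand-in for a real driver call that opens a database connection.
        conn = object()
        opened.append(conn)
        return conn

    pool = ConnectionPool(fake_connect, size=2)
    for _ in range(100):
        with pool.connection() as conn:
            pass  # run a query with conn here
    print(f"handled 100 requests with {len(opened)} connections")
```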

Reviewing cloud architecture overview materials helps you recognize performance anti-patterns before they impact production systems.

Implementing cloud architecture best practices for IT professionals

Applying cloud computing fundamentals requires systematic learning and iterative improvement. Start by thoroughly reviewing provider documentation. AWS, Azure, and Google Cloud publish comprehensive guides, reference architectures, and best practice documents. These resources reflect years of experience across millions of customer workloads. Treat them as your primary curriculum, not marketing materials.

Key AWS best practices translate directly into architectural decisions and operational procedures:

| Practice Code | Description | Impact Area |
|---|---|---|
| REL01-BP01 | Monitor service quotas and usage | Prevents service disruptions from quota limits |
| REL02-BP01 | Design highly available network connectivity | Eliminates single points of failure |
| PERF01-BP01 | Learn about available services | Enables optimal technology selection |
| PERF01-BP03 | Factor cost into architecture selection | Balances performance against budget constraints |
| PERF01-BP06 | Use benchmarking to drive improvement | Provides objective performance measurement |

Continuous monitoring forms the backbone of operational excellence. Implement comprehensive observability covering metrics, logs, and traces. Metrics reveal what is happening in your systems. Logs explain why events occurred. Traces show how requests flow through distributed components. Together, these three pillars enable rapid troubleshooting and proactive optimization.

Cost optimization requires ongoing attention, not one-time cleanup efforts. Review your cloud spending weekly, identifying resources that no longer serve business purposes. Rightsize instances based on actual utilization patterns. Reserve capacity for predictable workloads. Use Spot Instances for fault-tolerant batch processing. These practices compound over time and can cut costs substantially without sacrificing performance.

Architectural trade-offs demand quantitative evaluation. Create a simple framework scoring each option across relevant dimensions: cost, performance, reliability, security, and operational complexity. Weight these factors according to business priorities. A startup prioritizes speed and cost over enterprise-grade reliability. A healthcare provider inverts those priorities due to regulatory requirements. Your framework should reflect your specific context.
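A scoring framework like this fits in a few lines. The weights and the 1 to 5 scores below are hypothetical examples for a cost-sensitive team. Operational complexity is expressed as "simplicity" so that a higher score is always better.

```python
# Hypothetical weights reflecting one team's priorities (they sum to 1.0).
WEIGHTS = {
    "cost": 0.35,
    "performance": 0.25,
    "reliability": 0.15,
    "security": 0.15,
    "operational_simplicity": 0.10,
}


def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of 1-5 scores; every weighted dimension must be scored."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[d] * scores[d] for d in weights) / total_weight


def rank_options(options: dict[str, dict[str, float]], weights: dict[str, float]) -> list[str]:
    """Return option names ordered from highest to lowest weighted score."""
    return sorted(options, key=lambda name: weighted_score(options[name], weights), reverse=True)


if __name__ == "__main__":
    options = {  # hypothetical scores on a 1-5 scale
        "serverless": {"cost": 4, "performance": 3, "reliability": 4,
                       "security": 4, "operational_simplicity": 5},
        "containers": {"cost": 3, "performance": 4, "reliability": 4,
                       "security": 4, "operational_simplicity": 3},
    }
    for name in rank_options(options, WEIGHTS):
        print(f"{name}: {weighted_score(options[name], WEIGHTS):.2f}")
```

A healthcare team would raise the reliability and security weights. Rerunning the same scores with different weights can change the ranking even though the options themselves have not changed.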

Pro Tip: Integrate AI-driven monitoring tools that learn normal behavior patterns and alert on genuine anomalies rather than static thresholds. This reduces alert fatigue while catching subtle issues that indicate emerging problems. Exploring monitoring AI systems best practices demonstrates effective implementation approaches.
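The core idea of learning normal behavior can be shown without machine learning. This sketch swaps in a simpler statistical technique, a rolling z-score, which flags values far from the recent mean. It does not describe how any particular AI monitoring product works. The window, threshold, and latency values are hypothetical.

```python
from collections import deque
from statistics import mean, stdev


class RollingAnomalyDetector:
    """Flags a value whose z-score against a rolling window exceeds a threshold."""

    def __init__(self, window: int = 60, z_threshold: float = 3.0, min_samples: int = 10):
        if min_samples < 2 or window < min_samples:
            raise ValueError("need window >= min_samples >= 2")
        self.history: deque = deque(maxlen=window)
        self.z_threshold = z_threshold
        self.min_samples = min_samples

    def observe(self, value: float) -> bool:
        """Return True if `value` is anomalous relative to recent history, then record it."""
        anomalous = False
        if len(self.history) >= self.min_samples:
            recent = list(self.history)
            mu, sigma = mean(recent), stdev(recent)
            if sigma == 0:
                anomalous = value != mu  # flat baseline: any change stands out
            else:
                anomalous = abs(value - mu) / sigma > self.z_threshold
        self.history.append(value)
        return anomalous


if __name__ == "__main__":
    detector = RollingAnomalyDetector()
    latencies_ms = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 99, 300]
    for ms in latencies_ms:
        if detector.observe(ms):
            print(f"anomaly: {ms} ms")
```

The baseline adapts as new values arrive. As a result, the same detector works for a metric that normally sits at 100 ms and one that normally sits at 2 s, which a single static threshold cannot do.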

Iterative architecture improvement follows a continuous cycle. Establish baseline performance metrics before making changes. Implement one modification at a time. Measure the impact. If results improve, keep the change. If not, roll back and try a different approach. This disciplined methodology prevents the common mistake of changing multiple variables simultaneously, making it impossible to determine what actually helped.
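The keep-or-roll-back cycle can be written as a small loop. In this sketch, `Change`, `improve_iteratively`, and the 5% minimum gain are hypothetical. `measure` stands for whatever benchmark produces your baseline metric; lower is better here, as with p95 latency.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Change:
    name: str
    apply: Callable[[], None]
    rollback: Callable[[], None]


def improve_iteratively(changes: list[Change], measure: Callable[[], float],
                        min_gain_pct: float = 5.0) -> tuple[list[str], float]:
    """Apply changes one at a time, keeping only those that beat the current baseline."""
    baseline = measure()  # establish the baseline before touching anything
    kept = []
    for change in changes:
        change.apply()  # exactly one modification per cycle
        candidate = measure()
        gain_pct = 100.0 * (baseline - candidate) / baseline
        if gain_pct >= min_gain_pct:
            kept.append(change.name)
            baseline = candidate  # the improved result becomes the new baseline
        else:
            change.rollback()  # no meaningful gain: undo before trying the next idea
    return kept, baseline


if __name__ == "__main__":
    state = {"p95_ms": 200.0}  # simulated system for illustration

    def shift(delta: float) -> Callable[[], None]:
        return lambda: state.update(p95_ms=state["p95_ms"] + delta)

    changes = [
        Change("add cache", apply=shift(-50), rollback=shift(50)),
        Change("larger instance", apply=shift(-2), rollback=shift(2)),
    ]
    print(improve_iteratively(changes, measure=lambda: state["p95_ms"]))
```

Requiring a minimum gain helps avoid keeping changes whose effect is within measurement noise. The right threshold depends on how much your benchmark varies from run to run.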

Benchmarking drives objective decision-making. Test different instance types, database configurations, and architectural patterns under realistic load conditions. Synthetic benchmarks provide quick comparisons but often mislead. Production-like workloads reveal actual performance characteristics. Leveraging benchmarking to drive architecture improvements ensures changes deliver measurable value.
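A minimal benchmarking harness records many timed runs and reports percentiles rather than a single average. This sketch times a Python callable with `time.perf_counter`, and the two workloads are placeholders. As the paragraph warns, a micro-benchmark like this complements load tests against production-like traffic; it does not replace them.

```python
import statistics
import time
from typing import Callable


def benchmark(fn: Callable[[], object], iterations: int = 200, warmup: int = 20) -> dict[str, float]:
    """Time `fn` repeatedly and summarize latency in milliseconds."""
    if iterations < 2:
        raise ValueError("iterations must be at least 2 to compute percentiles")
    for _ in range(warmup):
        fn()  # warm caches and lazy initialization before measuring
    samples_ms = []
    for _ in range(iterations):
        start = time.perf_counter()
        fn()
        samples_ms.append((time.perf_counter() - start) * 1000.0)
    cuts = statistics.quantiles(samples_ms, n=100)  # 99 cut points: cuts[94] is p95
    return {
        "p50_ms": statistics.median(samples_ms),
        "p95_ms": cuts[94],
        "p99_ms": cuts[98],
        "max_ms": max(samples_ms),
    }


if __name__ == "__main__":
    data = list(range(50_000))
    lookup = set(data)
    candidates = {
        "list membership": lambda: 49_999 in data,
        "set membership": lambda: 49_999 in lookup,
    }
    for name, fn in candidates.items():
        result = benchmark(fn)
        print(name, {k: round(v, 4) for k, v in result.items()})
```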

Customer impact evaluation connects technical decisions to business outcomes. Track user-facing metrics like page load time, transaction success rate, and error frequency. Correlate infrastructure changes with these business metrics. A database migration that reduces query latency by 50ms means nothing if checkout completion rates remain unchanged. Focus optimization efforts where they improve customer experience measurably.
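A two-proportion z-test on conversion counts offers one way to check whether a business metric actually moved after an infrastructure change. This is a swapped-in statistical technique, not something the source prescribes. The checkout counts are hypothetical. The test assumes independent sessions and reasonably large samples.

```python
from math import erf, sqrt


def normal_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def two_proportion_z_test(success_before: int, total_before: int,
                          success_after: int, total_after: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the change in success rate."""
    p_before = success_before / total_before
    p_after = success_after / total_after
    pooled = (success_before + success_after) / (total_before + total_after)
    std_err = sqrt(pooled * (1 - pooled) * (1 / total_before + 1 / total_after))
    if std_err == 0:
        raise ValueError("rates of exactly 0% or 100% cannot be tested this way")
    z = (p_after - p_before) / std_err
    p_value = 2 * (1 - normal_cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical checkout completions out of sessions, before and after a database migration.
    z, p = two_proportion_z_test(4_000, 10_000, 4_060, 10_000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

In this example, the completion rate moves from 40.0% to 40.6%. The p-value lands well above 0.05, which means the difference could easily be noise. That is exactly the situation where a latency win has not yet shown up as a customer-facing improvement.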

Reviewing the cloud computing setup guide provides step-by-step implementation guidance for IT professionals establishing cloud environments.

Explore AICloudIT’s cloud solutions and resources

Mastering cloud computing basics opens doors to designing robust, efficient systems that scale with your organization. AICloudIT serves as your knowledge hub for cloud architecture, AI integration, and emerging technology trends. Our platform delivers practical insights from experienced practitioners who have built production systems serving millions of users.

Explore AICloudIT cloud computing solutions to discover tools and services that accelerate your cloud journey. Whether you’re migrating legacy applications, optimizing existing infrastructure, or designing greenfield architectures, our resources provide actionable guidance grounded in real-world experience.

Continue your learning through our comprehensive blog covering cloud architecture insights, performance optimization techniques, and cost management strategies. Each article addresses specific challenges IT professionals face, offering solutions you can implement immediately.

Frequently asked questions about cloud computing basics

What are the main benefits of cloud computing for IT professionals?

Cloud computing eliminates infrastructure management overhead, letting you focus on application development and business logic. You gain access to enterprise-grade services like managed databases, machine learning platforms, and global content delivery networks without capital investment. Pay-as-you-go pricing converts fixed costs into variable expenses that scale with usage.

How do service quotas impact cloud reliability?

Service quotas are limits on the resources you can provision in your cloud account. Many can be raised on request, and some are fixed. Exceeding a quota causes requests to be throttled or rejected until you reduce usage or the limit is raised. Proactive quota monitoring with alerts at 70% utilization prevents these disruptions by giving you time to request increases before hitting limits.

What is the role of benchmarking in cloud performance optimization?

Benchmarking provides objective measurements comparing different architectural approaches under realistic conditions. Testing various instance types, database configurations, or caching strategies reveals actual performance characteristics rather than theoretical capabilities. This data-driven approach eliminates guesswork, ensuring optimization efforts deliver measurable improvements in response time, throughput, or cost efficiency.

How can AI improve cloud system monitoring?

AI-powered monitoring tools learn normal behavior patterns across thousands of metrics, detecting anomalies that static thresholds miss. Machine learning models predict resource needs before demand spikes occur, enabling proactive scaling. Automated analysis identifies cost optimization opportunities and security risks across complex environments faster than manual review. These capabilities reduce operational overhead while improving system reliability.

What are common challenges when starting with cloud architectures?

IT professionals often struggle with the overwhelming number of available services, making it difficult to select appropriate tools for specific workloads. Understanding pricing models and predicting costs accurately requires experience most newcomers lack. Designing for cloud-native patterns like eventual consistency and stateless compute differs fundamentally from traditional architectures. Starting with managed services and reference architectures mitigates these challenges, providing proven patterns you can adapt to your requirements.